Added a residual to the latent diffusion first-stage training and trained it on the Danbooru dataset. It can now encode 256x256x3 pictures into eight ordered 16x16x4 latent codes. I don't know yet whether the latent codes generated by this first-stage model can be used for diffusion. https://t.co/5NFcO64qDK
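For a sense of scale, here is a rough back-of-the-envelope sketch of the shapes mentioned above. It only counts scalar values (not bits), and the helper name `num_values` is made up for illustration; it is not taken from the repo.

```python
def num_values(shape):
    """Number of scalar values in a tensor of the given shape."""
    n = 1
    for dim in shape:
        n *= dim
    return n


image_shape = (256, 256, 3)  # input picture, as stated in the post
code_shape = (16, 16, 4)     # one latent code, as stated in the post
num_codes = 8                # eight ordered codes, as stated in the post

image_values = num_values(image_shape)
latent_values = num_codes * num_values(code_shape)

# Spatial downsampling factor along each side
downsample = image_shape[0] // code_shape[0]
# Reduction in value count when all eight codes are kept
ratio_all = image_values / latent_values
# Reduction in value count when only the first code is kept
ratio_first = image_values / num_values(code_shape)

print(downsample, ratio_all, ratio_first)  # 16 24.0 192.0
```

So each side is downsampled 16x, and the eight codes together hold 24x fewer values than the original picture.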

Tired of Colab's K80, so I created a repo with a PaddlePaddle version of ruDalle. The weights were converted so that inference can run on the free V100.

GitHub: https://t.co/jW9iYGOTpf

Free V100 on AI Studio: https://t.co/Lc48Kr9mPW (Note: this is a Chinese-language community)

Recently I saw some papers on separating image colors from sketches, so I tidied up an idea I had two years ago and open-sourced it: https://t.co/vX3jgtZZ1d

It's a neural network model for extracting regions from an image. This simple model is only 600 KB.

@ak92501 This seems to coincide with my idea, but mine colorizes arbitrary anime line art. The results in this paper look a bit weak. A separate encoder for the line art shouldn't be needed. Just merge the line art and the noise together as the input.

https://t.co/mOJWWIeANK

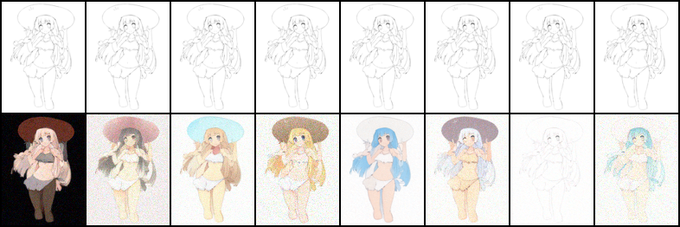
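A minimal sketch of what "merge the line art and noise together as input" could look like, assuming "merge" means channel-wise concatenation. The function name, shapes, and channel counts are all hypothetical and only for illustration, not the actual model code.

```python
import numpy as np


def merge_line_art_and_noise(line_art, noise):
    """Concatenate line art and noise along the channel axis (axis 0).

    Both arrays are assumed to be channel-first: (channels, height, width).
    """
    if line_art.shape[1:] != noise.shape[1:]:
        raise ValueError("line art and noise must share height and width")
    return np.concatenate([line_art, noise], axis=0)


rng = np.random.default_rng(0)
# Placeholder 1-channel line art; a real sketch image would go here.
line_art = rng.random((1, 256, 256)).astype(np.float32)
# 3-channel Gaussian noise (channel count is an assumption).
noise = rng.standard_normal((3, 256, 256)).astype(np.float32)

model_input = merge_line_art_and_noise(line_art, noise)
print(model_input.shape)  # (4, 256, 256)
```

With this layout the network sees the line art directly in its first input channel, so no separate line-art encoder is involved.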

I thought the colorized results would not be good on real data, but I was wrong. Today I found some real anime-sketch pairs for comparison. The colorized results of this model are truly amazing to me.

Results are in https://t.co/2n9I8EQcrp under the `sample-chaosinism*` prefix.