Twitter illustration search results for BigGAN. 3 results

"A cloud made of [dogs / eyeballs / love / water]."

Fascinating to use #CLIP from @OpenAI to steer #BigGAN. The text on each image is the prompt I used to guide BigGAN to that image.

Huge thanks to @advadnoun for code/Colab and @AmysImaginarium for inspiration! 🙏

Thread ⬇️

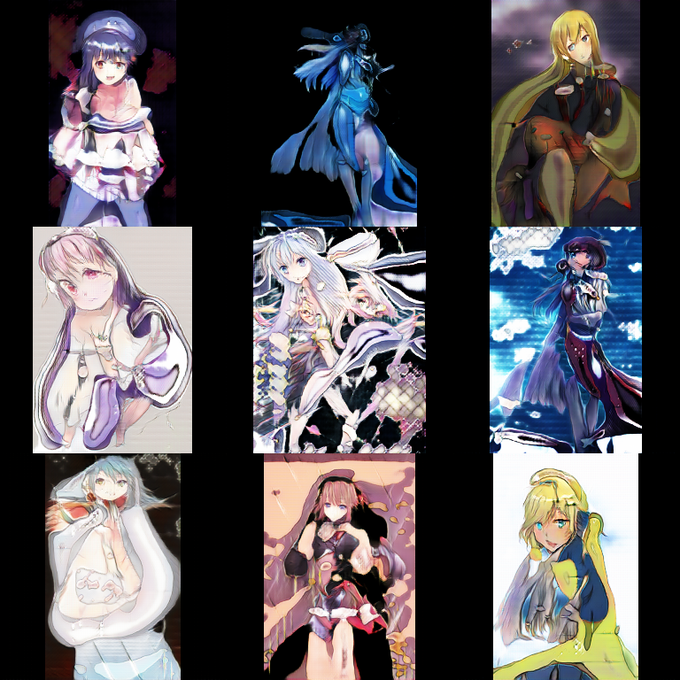

After all, StyleGAN's architecture is quite similar to BigGAN's. So it must be due to one of the "bells and whistles" that StyleGAN comes with.

I'll just give the answer: Style mixing on (left, step 26k), style mixing off (right, 24k). Note how much more cohesive the bodies are.

Following up, another interpolation video of the same model much later in training. It really seems that, despite this one being trained with random cropping, StyleGAN2 prefers generating centrally placed objects (especially evident in comparison with BigGAN...) https://t.co/XQwTEb7NbY